Stop Guessing, Start Knowing: The Importance of Exam Diagnostics in 2026

The final bell rings, and as the learners file out, a familiar weight settles on your shoulders. It’s not just your satchel heavy with books; it’s the tower of marked exam papers on your desk. You’ve spent hours, perhaps days, meticulously marking each script. You’ve calculated the class average, identified the top performers, and noted those who are struggling. You have a feeling you know what went wrong. “They just didn’t understand Photosynthesis,” you might think, or “The application questions were too difficult.”

But what if that "gut feeling" is incomplete? What if the problem wasn't the entire topic of Photosynthesis, but specifically their inability to interpret data from a graph about light intensity? What if the issue with the application questions was that they were all clustered in one challenging section, causing cognitive overload?

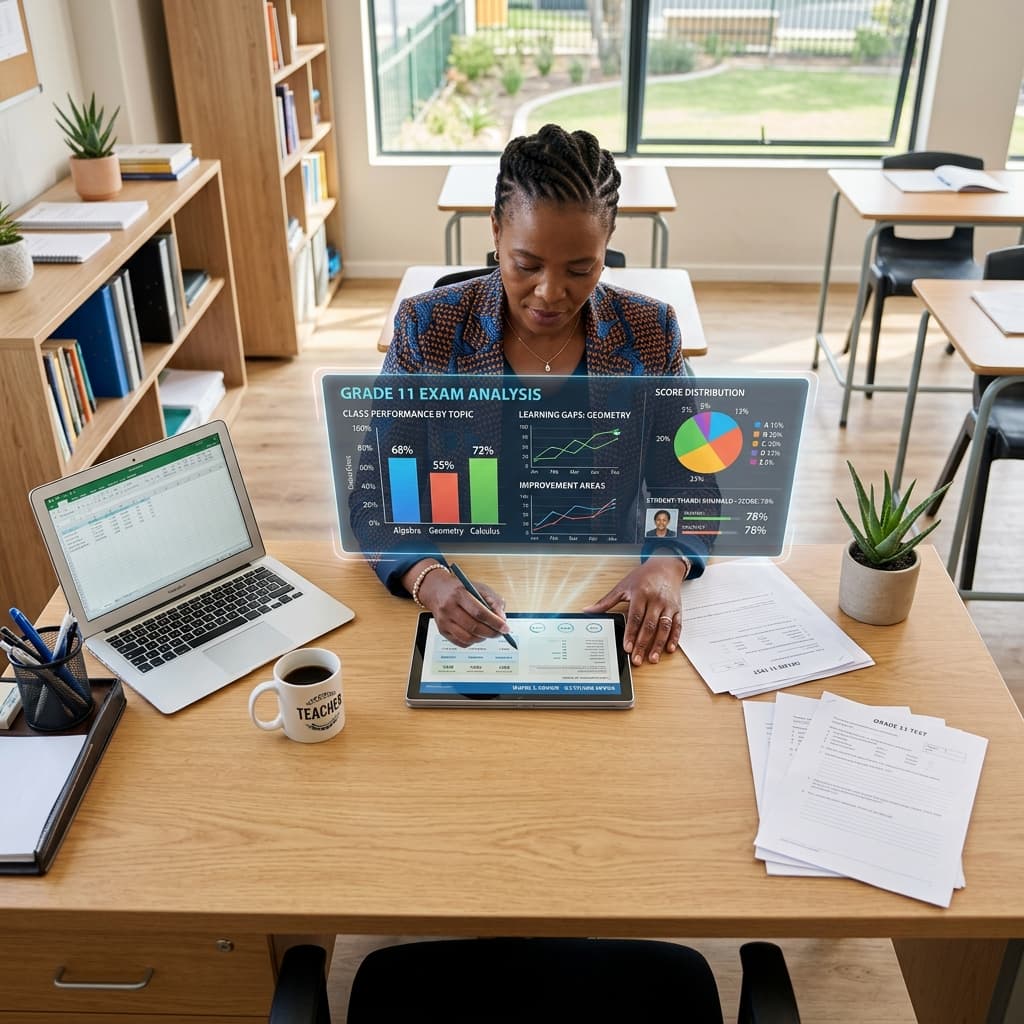

For too long, South African teachers, Heads of Department (HODs), and School Management Teams (SMTs) have relied on surface-level analysis. We’ve been operating with a blurry photograph when we need a high-resolution blueprint. As we look towards 2026, in an educational landscape demanding greater accountability and improved outcomes, this approach is no longer sustainable. The era of educational guesswork is over. The era of precision, data-driven insight has begun, and its cornerstone is exam diagnostic analysis.

This comprehensive guide will unpack the critical importance of deep exam diagnostics within the South African CAPS framework, highlighting how moving beyond the mark sheet can revolutionise your teaching, departmental management, and overall school improvement.

The Old Way: The Post-Mortem and Its Pitfalls

Traditionally, post-assessment analysis has been a reactive, time-consuming, and often superficial process. It typically involves:

- Marking: The primary focus is on assigning a score to each answer.

- Calculating Averages: Determining the class mean, median, and mode.

- Basic Item Analysis: Perhaps noting that "Question 7 was a problem for most learners."

- A General "Post-Mortem" Discussion: A brief chat in a departmental meeting about what "worked" and what "didn't."

While better than nothing, this approach is deeply flawed. It's like a doctor declaring a patient unwell based only on their temperature. It tells you that a problem exists, but almost nothing about why it exists or what to do next. It fails to identify the nuanced student learning gaps and provides no clear, actionable path for intervention. It’s an autopsy, not a diagnosis.

What is True Exam Diagnostic Analysis in the South African CAPS Context?

True exam diagnostics is the systematic process of deconstructing an assessment and its results to understand three critical dimensions: curriculum alignment, cognitive demand, and learner performance patterns. It’s an investigative tool that transforms a simple test into a rich source of data, providing a clear roadmap for targeted intervention and instructional improvement.

To be effective in South Africa, this analysis must be deeply rooted in the requirements of the Curriculum and Assessment Policy Statement (CAPS). Let's break down the three pillars of a robust diagnostic analysis.

Pillar 1: Curriculum Coverage and Weighting (The 'What')

The first question a diagnostic analysis must answer is: "Did the assessment accurately reflect what was meant to be taught and assessed?" This goes far beyond a simple checklist.

- CAPS Topic Coverage: Does the exam cover the prescribed topics for the term as outlined in the Annual Teaching Plan (ATP)? It's surprisingly easy for an exam to inadvertently over-assess one topic while completely neglecting another. This is known as topic drift.

- Weighting: Does the mark allocation for each topic align with the time and emphasis prescribed in the CAPS document? If 40% of teaching time was spent on Genetics but Genetics carries only 15% of the exam marks, the assessment is not a valid measure of the term's work. A proper diagnostic analysis quantifies this, exposing any imbalances.

Without this foundational check, you might wrongly conclude that learners are weak in a certain topic, when in reality, they were simply not assessed on it fairly or comprehensively.
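To make the weighting check concrete, here is a minimal Python sketch. The question tags, the intended percentages, and the 5-point tolerance are all made-up illustrations (they are not taken from any CAPS document or from the SA Teachers tool); the point is only to show how mark allocation per topic can be compared against a plan.

```python
from collections import defaultdict

# Hypothetical question tags: (question label, topic, marks).
questions = [
    ("Q1", "Genetics", 15),
    ("Q2", "Photosynthesis", 45),
    ("Q3", "Cell Division", 40),
]

# Hypothetical intended weighting per topic, as a percentage of the paper.
intended = {"Genetics": 40, "Photosynthesis": 30, "Cell Division": 30}


def topic_weighting(questions):
    """Return each topic's share of the total marks, as a percentage."""
    marks = defaultdict(int)
    for _label, topic, mark in questions:
        marks[topic] += mark
    total = sum(marks.values())
    return {topic: 100 * m / total for topic, m in marks.items()}


def weighting_gaps(actual, intended, tolerance=5.0):
    """Return topics whose actual share misses the plan by more than `tolerance` points."""
    gaps = {}
    for topic, target in intended.items():
        diff = actual.get(topic, 0.0) - target
        if abs(diff) > tolerance:
            gaps[topic] = diff
    return gaps


actual = topic_weighting(questions)
gaps = weighting_gaps(actual, intended)
for topic, diff in gaps.items():
    print(f"{topic}: {diff:+.1f} percentage points against the plan")
```

In this invented paper, Genetics lands 25 points below its planned share, which is exactly the kind of imbalance a mark total alone would never reveal.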

Pillar 2: Cognitive Demand (The 'How')

This is where many manual analyses fall short. The CAPS document is explicit about the required cognitive levels for assessments, typically based on Bloom's Taxonomy. For example, an FET (Further Education and Training) phase examination often requires a specific split, such as:

- Lower Order (Knowledge & Comprehension): 40%

- Middle Order (Application & Analysis): 40%

- Higher Order (Synthesis & Evaluation): 20%

A "gut feel" assessment of cognitive levels is notoriously unreliable. A question that seems like "Application" to the setter might, in fact, only require "Comprehension." A robust exam diagnostic tool will systematically classify each question according to Bloom's Taxonomy and calculate the exact percentages.

Why is this critical?

- An exam skewed towards lower-order questions creates a false sense of security. Learners may achieve high marks but will be utterly unprepared for the cognitive demands of their final Grade 12 examinations or tertiary studies.

- An exam with too many higher-order questions without sufficient scaffolding can lead to mass failure and demoralisation, providing little useful data on what learners actually do know.

A proper CAPS assessment is one that is balanced not just in content, but in cognitive challenge.
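The percentage calculation itself is simple once every question carries a level tag. The sketch below assumes the 40/40/20 split quoted above as the target and uses an invented five-question paper; the `BAND` grouping of Bloom's levels into lower, middle, and higher order follows the list above, and the names are illustrative only.

```python
# Grouping of Bloom's levels into the three bands listed above.
BAND = {
    "Knowledge": "lower", "Comprehension": "lower",
    "Application": "middle", "Analysis": "middle",
    "Synthesis": "higher", "Evaluation": "higher",
}

# Example target split from the text (percent of total marks).
TARGET = {"lower": 40, "middle": 40, "higher": 20}

# Hypothetical paper: (question label, Bloom's level, marks).
paper = [
    ("Q1", "Knowledge", 30),
    ("Q2", "Comprehension", 35),
    ("Q3", "Application", 20),
    ("Q4", "Analysis", 10),
    ("Q5", "Evaluation", 5),
]


def cognitive_split(paper):
    """Return the percentage of total marks that falls in each cognitive band."""
    totals = {band: 0 for band in TARGET}
    for _label, level, marks in paper:
        totals[BAND[level]] += marks
    grand_total = sum(totals.values())
    return {band: 100 * m / grand_total for band, m in totals.items()}


split = cognitive_split(paper)
for band, share in split.items():
    print(f"{band}: {share:.0f}% of marks (target {TARGET[band]}%)")
```

The hard part in practice is the tagging, not the arithmetic: two setters can disagree about whether a question is "Comprehension" or "Application", and the split is only as reliable as those labels.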

Pillar 3: Granular Learner Performance (The 'Who' and 'Why')

This is the pillar that directly uncovers student learning gaps. It moves beyond the total score of a learner to analyse how they achieved that score. A powerful diagnostic analysis answers questions like:

- Which specific questions did most learners get wrong?

- Was the error related to the topic (e.g., Trigonometry) or the cognitive skill (e.g., Analysis)?

- Did learners from a specific previous teacher's class all struggle with the same foundational concept?

- Are high-achieving learners acing the lower-order questions but failing the higher-order ones, indicating a ceiling in their critical thinking skills?

- Do learners consistently lose marks on questions that require them to "evaluate," "compare," or "justify"?

By cross-referencing learner performance with the topic and cognitive level of each question, you can pinpoint the epicentre of the problem. You can differentiate between a content knowledge gap and a cognitive skill gap. This distinction is the key to effective intervention.
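As a rough illustration of that cross-referencing, the following Python sketch groups the same set of marks twice: once by topic and once by cognitive level. The questions, learners, and scores are invented, and `percent_by` is a hypothetical helper, not part of any real tool.

```python
from collections import defaultdict

# Hypothetical question tags: topic, cognitive level, and maximum marks.
questions = {
    "Q1": {"topic": "Trigonometry", "level": "Knowledge", "max": 10},
    "Q2": {"topic": "Trigonometry", "level": "Analysis", "max": 10},
    "Q3": {"topic": "Functions", "level": "Knowledge", "max": 10},
    "Q4": {"topic": "Functions", "level": "Analysis", "max": 10},
}

# Hypothetical per-question marks for each learner.
marks = {
    "Learner A": {"Q1": 9, "Q2": 3, "Q3": 8, "Q4": 2},
    "Learner B": {"Q1": 8, "Q2": 4, "Q3": 9, "Q4": 3},
}


def percent_by(key, questions, marks):
    """Return the percentage of available marks earned, grouped by `key` ('topic' or 'level')."""
    earned = defaultdict(int)
    possible = defaultdict(int)
    for scores in marks.values():
        for question, score in scores.items():
            group = questions[question][key]
            earned[group] += score
            possible[group] += questions[question]["max"]
    return {group: 100 * earned[group] / possible[group] for group in possible}


by_topic = percent_by("topic", questions, marks)
by_level = percent_by("level", questions, marks)
print(by_topic)  # {'Trigonometry': 60.0, 'Functions': 55.0}
print(by_level)  # {'Knowledge': 85.0, 'Analysis': 30.0}
```

Here the two topics score almost the same, while the cognitive levels are far apart. That pattern points to a cognitive skill gap (Analysis) rather than a content gap in Trigonometry or Functions.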

The Manual Nightmare: Why True Diagnostics Are Rarely Done

If this level of analysis is so powerful, why isn't every school in South Africa doing it for every single assessment? The answer is simple: time.

To perform a manual diagnostic analysis as described above, a teacher or HOD would need to:

- Create a complex spreadsheet.

- Manually re-read every question in the exam paper.

- Tag each question with its corresponding CAPS topic and sub-topic.

- Debate and assign a Bloom's Taxonomy level to each question.

- Calculate the weighting of topics and cognitive levels to check for compliance.

- Enter every single learner's mark for every single question into the spreadsheet.

- Create pivot tables and formulas to analyse the data.

- Attempt to visualise the findings in a way that is easy to understand.

This is not a task of hours; it's a task of days. For an HOD managing multiple teachers and subjects, it's an administrative impossibility. The sheer workload means this crucial process is often skipped entirely, leaving teachers to rely on that same old "gut feel."

The 2026 Solution: SA Teachers' Automated Exam Diagnostic Tool

The demands of modern education require modern solutions. The manual process is broken, but the need for deep analysis is greater than ever. This is precisely the gap that SA Teachers fills with its revolutionary, automated Exam Diagnostic tool.

This tool is not just a digital spreadsheet; it's a powerful analytical partner designed specifically for the realities of the South African classroom. It automates the entire painstaking moderation and diagnostic process, turning days of work into minutes of insight.

From Tedious Task to Effortless Insight: How It Works

The SA Teachers platform has streamlined exam diagnostics into a simple, intuitive process:

- Upload Your Assessment: Simply upload your question paper and memorandum. This can be a Word document, a PDF, or even just pasted text.

- AI-Powered Analysis: The tool’s intelligent engine instantly gets to work. It reads and understands the context of your questions.

- Receive Your Report: Within minutes, you receive a comprehensive diagnostic report that provides clear, actionable data.

This report is the key to unlocking educational intelligence. It doesn't just give you numbers; it gives you answers.

Key Features That End the Guesswork Forever

The SA Teachers Exam Diagnostic tool is built to address the specific pillars of analysis required by the CAPS curriculum.

- Automated CAPS Coverage Verification: The tool cross-references your assessment against the curriculum framework, flagging any gaps or areas of over-assessment. It instantly identifies topic drift, ensuring your exams are a fair and valid reflection of the term's work.

- Instant Bloom's Taxonomy Analysis: Say goodbye to subjective debates about cognitive levels. The tool automatically classifies each question (Knowledge, Comprehension, Application, Analysis, Synthesis, Evaluation) and presents you with a precise breakdown. You can see instantly if your paper meets the required cognitive ratios set by the Department of Basic Education (DBE).

- Automated Moderation: The entire pre-assessment moderation task, which consumes countless HOD hours, is automated. The tool generates a report that serves as your moderation evidence, verifying curriculum compliance and cognitive balance before the paper is ever printed. This is proactive quality assurance, not reactive damage control.

- Pinpoint Specific Student Learning Gaps: Once you've captured learner marks (a quick and easy process on the platform), the tool generates powerful visual reports. It shows you exactly which questions, topics, and cognitive skills were the biggest barriers to success for your class, for specific groups of learners, or even for individuals. You can finally see the difference between a student who doesn't know the content and a student who can't apply it.

A Practical Example: Transforming a Grade 11 Physical Sciences Paper

Imagine you are an HOD for Physical Sciences. A teacher submits a Physics Paper 1 for moderation.

- The Old Way: You spend two hours reading the paper, manually creating a grid, and debating cognitive levels. You suspect it's a bit heavy on recall questions, but you can't be sure without a lengthy calculation.

- The SA Teachers Way: The teacher uploads the paper to the Exam Diagnostic tool. Five minutes later, you both look at the report. It clearly shows:

  - Cognitive Imbalance: The paper is 65% lower-order questions, far from the required 40%.

  - Topic Drift: The "Newton's Laws" section makes up 50% of the marks, while "Momentum and Impulse" is under-represented.

  Action: You can now give the teacher precise, data-backed feedback. "We need to convert two of the 'define' questions in the Newton's section into 'application' or 'analysis' questions, and add an 8-mark question on impulse to balance the paper."

The paper is fixed before it's written. After the exam, the data reveals that 80% of learners failed the single "Evaluation" question. Your departmental intervention is now crystal clear: you don't need to re-teach the entire syllabus; you need to run a targeted workshop on higher-order thinking skills. This is the power of moving from guessing to knowing.
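A figure like "80% of learners failed this question" comes from a per-question failure rate. The sketch below shows one way to compute it, assuming a learner "fails" a question when they score below 50% of its marks; that threshold, the question labels, and all the scores are assumptions for illustration, not data from a real class.

```python
# Hypothetical maximum marks per question.
max_marks = {"Q1 (Knowledge)": 4, "Q9 (Evaluation)": 8}

# Hypothetical per-question marks for five learners.
marks = {
    "Learner A": {"Q1 (Knowledge)": 4, "Q9 (Evaluation)": 2},
    "Learner B": {"Q1 (Knowledge)": 3, "Q9 (Evaluation)": 3},
    "Learner C": {"Q1 (Knowledge)": 4, "Q9 (Evaluation)": 1},
    "Learner D": {"Q1 (Knowledge)": 2, "Q9 (Evaluation)": 6},
    "Learner E": {"Q1 (Knowledge)": 3, "Q9 (Evaluation)": 3},
}


def failure_rate(question, max_marks, marks, pass_fraction=0.5):
    """Return the percentage of learners scoring below `pass_fraction` of the question's marks."""
    threshold = pass_fraction * max_marks[question]
    scores = [learner_scores[question] for learner_scores in marks.values()]
    failed = sum(1 for score in scores if score < threshold)
    return 100 * failed / len(scores)


rates = {question: failure_rate(question, max_marks, marks) for question in max_marks}
for question, rate in rates.items():
    print(f"{question}: {rate:.0f}% of learners below the pass mark")
```

With these invented marks, nobody fails the recall question while four of the five learners fail the evaluation question, which is the signal that the intervention should target the skill rather than the content.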

Conclusion: Stop Guessing, Start Teaching with Precision

As we advance towards 2026, the call for data-informed instruction will only grow louder. The pressure to close student learning gaps and ensure every learner is prepared for their future requires a more intelligent, efficient, and precise approach to assessment.

Continuing with the old methods of surface-level analysis is no longer an option. It's inefficient, ineffective, and unfair to both teachers and learners. It leaves dedicated educators feeling overwhelmed and learners' needs misunderstood.

The future of effective education in South Africa lies in leveraging technology to do the heavy lifting, freeing up teachers to do what they do best: teach. The SA Teachers Exam Diagnostic tool is more than just software; it's a partner in pedagogical excellence. It ends the guesswork, eliminates administrative burdens, and provides the clear, actionable insights needed to drive real improvement.

Don't spend another term trying to interpret a blurry picture. It's time to get the high-resolution blueprint of your students' learning.

Take the first step towards transforming your assessment strategy. Explore the SA Teachers Exam Diagnostic tool today and start knowing, not guessing.

Andile M.

Dedicated to empowering South African teachers through modern AI strategies, research-backed pedagogy, and policy insights.