Decoding Student Performance: A South African Teacher's Guide to Reading a Diagnostic Analysis Report

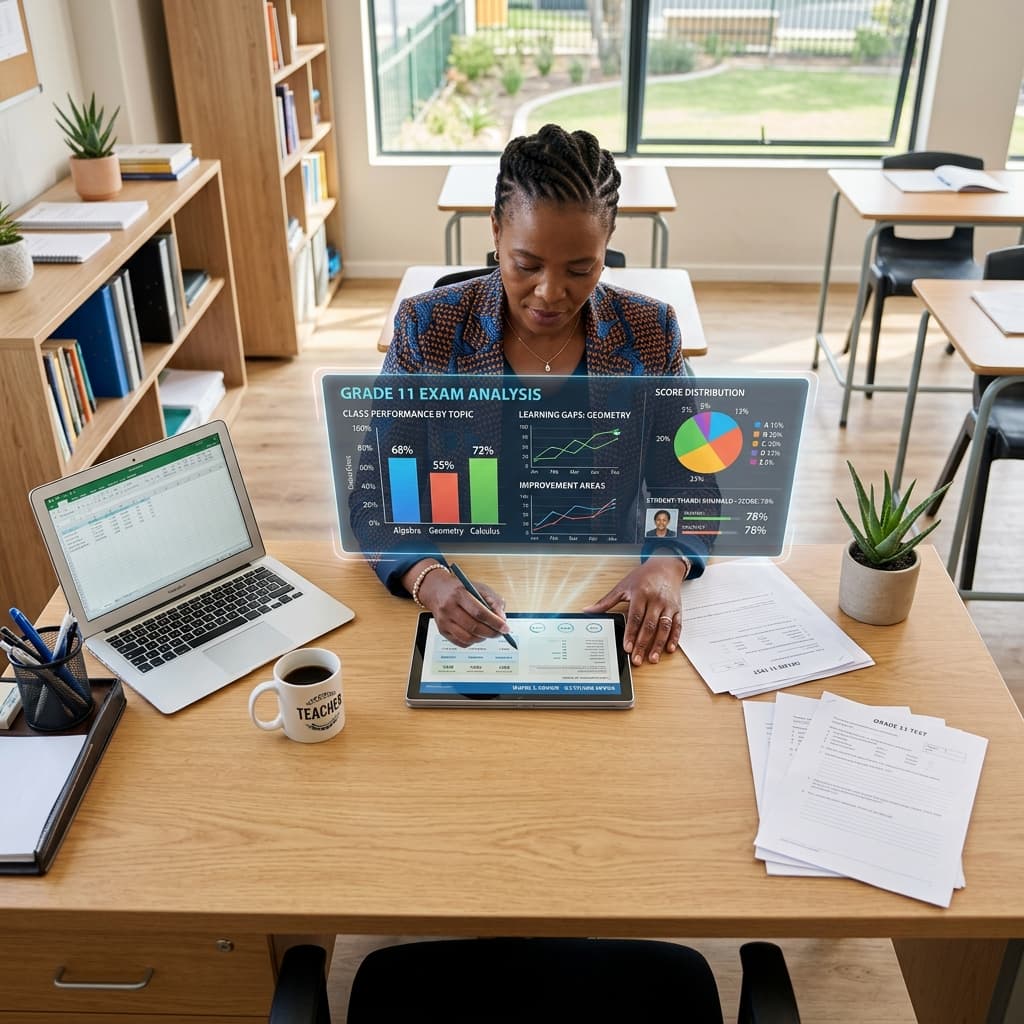

The final bell rings, but for South African teachers and Heads of Department (HODs), the work is far from over. A towering stack of marked scripts sits on the desk, each one representing a learner's effort, understanding, and struggle. The marks are in, the spreadsheet is populated, but what does it all mean? A 65% in Mathematics or a 58% in Life Sciences is just a number. The real gold lies hidden beneath that percentage—in the patterns, the common errors, and the specific learning gaps. This is where a diagnostic analysis becomes a teacher's most powerful tool.

For too long, we've been trapped in a cycle of summative assessment—test, mark, report, repeat. But to truly move the needle on academic performance and meet the rigorous demands of the CAPS curriculum, we need to shift our focus from merely measuring to actively diagnosing. This comprehensive guide is designed for South African teachers, HODs, and school management teams. We will unpack how to read, interpret, and, most importantly, act on the data from a diagnostic analysis report to foster meaningful learning and drive student success.

What Exactly is a Diagnostic Analysis? Beyond the Mark Sheet

A standard mark sheet tells you what a student scored. A diagnostic analysis report tells you why. It is a detailed, granular breakdown of learner and class performance in an assessment, moving far beyond a single percentage. It is a formative tool at its core, designed to inform and guide future teaching and learning strategies.

In the context of South African education, a proper exam diagnostic serves several critical functions:

- Identifies Student Learning Gaps: It pinpoints the exact concepts, topics, and skills within the CAPS framework where learners are struggling. It’s the difference between knowing a student is weak in "Algebra" and knowing they specifically struggle with "factorising trinomials."

- Evaluates Teaching Effectiveness: It provides honest, data-driven feedback on which teaching methods are landing and which concepts need to be revisited or taught differently.

- Assesses Cognitive Demand: It analyses performance across different cognitive levels, as outlined by Bloom's Taxonomy, which is a cornerstone of CAPS assessment policy.

- Informs Intervention Strategies: It provides the necessary evidence to design targeted support for individual learners, small groups, and the entire class.

Essentially, a diagnostic analysis transforms a test from a final judgment into a starting point for improvement.

The Core Components of a Comprehensive Exam Diagnostic Report

A truly useful diagnostic report is rich with data, organised logically to tell a clear story about student performance. When you receive or generate one, these are the key components you should be looking for.

Overall Learner and Class Performance

This is your 30,000-foot view. It provides the initial context before you drill down into the details.

- Average (Mean): The total marks of all learners divided by the number of learners. This gives you a general sense of the class's central tendency.

- Median: The middle score when all scores are listed in order. This is often more useful than the average, as it isn't skewed by a few very high or very low performers.

- Mode: The most frequently occurring score.

- Range: The difference between the highest and lowest scores. A very wide range can indicate a significant diversity in understanding and ability within the class.

SA Context: In a large, diverse South African classroom, the range and median are often more telling than the average. They can quickly highlight whether you have a cohesive group or a class with significant polarisation in performance.
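The four headline statistics above can be computed in a few lines. The following is a minimal sketch using Python's standard `statistics` module; the `scores` list and the `summarise` helper are hypothetical and only illustrate the definitions.

```python
from statistics import mean, median, multimode

# Hypothetical percentage scores for one class (illustrative data only).
scores = [34, 41, 48, 52, 52, 58, 63, 67, 71, 88, 95]

def summarise(scores):
    """Return the mean, median, mode(s) and range of a list of scores."""
    return {
        "mean": round(mean(scores), 1),
        "median": median(scores),
        "mode": multimode(scores),  # a list, because several scores can tie
        "range": max(scores) - min(scores),
    }

summary = summarise(scores)
print(summary)
```

In this made-up class the mean sits a little above the median, which suggests that a few high scorers are pulling the average up.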

Topic-by-Topic Breakdown (CAPS Alignment)

This is where the analysis becomes truly powerful for a CAPS-focused educator. The report should break down performance according to the specific topics and sub-topics prescribed in the CAPS assessment guidelines for that subject and grade.

Example (Grade 10 Mathematics):

- Algebraic Expressions: 72% Average

- Exponents: 65% Average

- Number Patterns: 81% Average

- Equations and Inequalities: 49% Average

- Trigonometry: 58% Average

Immediately, you can see that "Equations and Inequalities" is a major problem area for the class as a whole. This is a clear, actionable insight. Your diagnostic analysis has flagged a specific content area that requires immediate attention.
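One way to arrive at topic averages like these is to add up the marks earned and the marks available for every question tagged to a topic. The sketch below assumes a hypothetical list of question rows; the question numbers, mark allocations and class means are invented for illustration.

```python
from collections import defaultdict

# Hypothetical rows: (question, CAPS topic, marks available, class mean mark).
questions = [
    ("1.1", "Algebraic Expressions", 4, 3.0),
    ("1.2", "Algebraic Expressions", 6, 4.2),
    ("2.1", "Equations and Inequalities", 5, 2.5),
    ("2.2", "Equations and Inequalities", 5, 2.4),
    ("3.1", "Number Patterns", 5, 4.0),
]

def topic_percentages(rows):
    """Mark-weighted average percentage per topic."""
    earned = defaultdict(float)
    available = defaultdict(float)
    for _, topic, max_mark, mean_mark in rows:
        earned[topic] += mean_mark
        available[topic] += max_mark
    return {t: round(100 * earned[t] / available[t], 1) for t in earned}

pcts = topic_percentages(questions)
weakest = min(pcts, key=pcts.get)  # topic with the lowest average
print(pcts, weakest)
```

Weighting by marks, rather than averaging the question percentages, means a 10-mark question counts for more than a 2-mark question, which mirrors how the paper itself was weighted.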

Cognitive Level Analysis (Bloom's Taxonomy)

The CAPS document is explicit about setting assessments that test a range of cognitive skills, from basic recall to complex evaluation. A robust exam diagnostic must analyse performance against these levels. The exact levels and their weightings differ from subject to subject, but a typical four-level breakdown looks like this:

- Level 1: Knowledge (Remembering): Recalling facts, terms, basic concepts. (e.g., "Define photosynthesis.")

- Level 2: Comprehension (Understanding): Explaining ideas or concepts. (e.g., "Explain the process of photosynthesis in your own words.")

- Level 3: Application (Applying): Using information in new situations. (e.g., "Given this data, calculate the rate of photosynthesis.")

- Level 4: Analysis/Synthesis/Evaluation (Higher-Order Thinking): Drawing connections, justifying a stance, creating new ideas. (e.g., "Evaluate the impact of deforestation on the rate of global photosynthesis and justify your answer.")

Your report should show the average score for questions at each cognitive level. It is very common to see high scores for Level 1 and 2 questions, with a dramatic drop-off at Levels 3 and 4. This indicates that learners can memorise information but struggle to apply it or think critically, which is a significant learning gap in its own right.
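The drop-off described above can be located by averaging question results per level and comparing neighbouring levels. This is a rough sketch with invented figures; `by_level` and the variable names are assumptions, not part of any particular report format.

```python
from statistics import mean

# Hypothetical: percentage of marks the class earned on each question,
# grouped by cognitive level (1 = Knowledge ... 4 = higher-order thinking).
by_level = {
    1: [85, 78, 90],
    2: [74, 70],
    3: [48, 52, 44],
    4: [35, 30],
}

# Average performance per level, in level order.
level_means = {lvl: round(mean(v), 1) for lvl, v in sorted(by_level.items())}

# Drop in average between each pair of neighbouring levels.
levels = list(level_means)
drops = {
    (a, b): round(level_means[a] - level_means[b], 1)
    for a, b in zip(levels, levels[1:])
}
biggest_drop = max(drops, key=drops.get)
print(level_means, biggest_drop)
```

With this sample data the largest fall is between Levels 2 and 3, which would point to application rather than recall as the sticking point.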

Question-by-Question Analysis

This is the most granular level of a diagnostic analysis. It lists every question from the assessment and shows the percentage of learners who answered it correctly.

- High-Performing Questions (>80% correct): These validate that the concept was taught and understood effectively.

- Average-Performing Questions (50-80% correct): These topics are generally understood but may have nuances that some learners missed.

- Low-Performing Questions (<50% correct): These are your red flags. These questions require deep investigation. Was the concept not understood? Was the wording of the question confusing? Was the cognitive demand too high?

By cross-referencing a low-performing question with its CAPS topic and Bloom's level, you can achieve an incredible level of diagnostic precision.
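The three bands and the cross-referencing step can be expressed as a small filter. The sketch below uses the thresholds stated above; the question records themselves are hypothetical.

```python
def band(pct_correct):
    """Classify a question using the thresholds above (>80, 50-80, <50)."""
    if pct_correct > 80:
        return "high"
    if pct_correct >= 50:
        return "average"
    return "low"

# Hypothetical question records with their CAPS topic and cognitive level.
questions = [
    {"q": "1.1", "topic": "Number Patterns", "level": 1, "pct_correct": 88},
    {"q": "2.3", "topic": "Equations and Inequalities", "level": 3, "pct_correct": 41},
    {"q": "3.2", "topic": "Exponents", "level": 2, "pct_correct": 80},
    {"q": "5.3", "topic": "Equations and Inequalities", "level": 4, "pct_correct": 27},
]

# Red flags, each paired with its topic and level for cross-referencing.
red_flags = [
    (q["q"], q["topic"], q["level"])
    for q in questions
    if band(q["pct_correct"]) == "low"
]
print(red_flags)
```

Here both red flags fall under the same topic and sit at Levels 3 and 4, which is the kind of pattern the cross-reference is meant to surface.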

A Practical, Step-by-Step Guide to Interpreting Your Diagnostic Analysis

Data can be overwhelming. Follow this structured approach to make sense of your report and extract actionable insights.

Step 1: Start with the Macro View (The Forest)

Look at the overall class statistics. Is the average where you expected it to be based on your professional judgment? Is the performance distribution a standard bell curve, or is it skewed? This sets the scene.

Step 2: Drill Down into CAPS Topics (The Trees)

Move to the topic-by-topic breakdown. Identify the top 2-3 strongest topics and the bottom 2-3 weakest topics. This is your first major priority list for intervention and revision.

Step 3: Scrutinise the Cognitive Levels (The Roots)

Analyse the Bloom's Taxonomy performance. Is there a clear "glass ceiling" where performance drops? For example, if learners ace Remembering and Understanding questions but fail at Applying, you know the issue isn't a lack of knowledge, but a lack of ability to use that knowledge in a practical context. This tells you how to plan your intervention: focus on problem-solving and application-based activities, not more content cramming.

Step 4: Pinpoint Problematic Questions (The Specific Leaves)

Now, look at the question-by-question analysis. Focus on the questions where less than 50% of the class got the correct answer. For each of these red-flag questions, ask yourself:

- Content: What specific CAPS concept did this question assess?

- Cognitive Skill: What was the Bloom's level? Was it a higher-order question?

- Common Error: Can I identify a pattern in the incorrect answers? Did everyone make the same conceptual mistake? Did they misinterpret the question?
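For the "Common Error" question, a simple tally of the incorrect answers often makes the pattern visible. The following sketch assumes you have captured each learner's final answer for one red-flag question; the answers shown are invented.

```python
from collections import Counter

# Hypothetical final answers from eight learners to one red-flag question.
correct = "x = -3"
responses = ["x = 3", "x = -3", "x = 3", "x = 3", "x = 12", "x = -3", "x = 3", ""]

# Tally every response that does not match the memo (blanks included).
wrong = Counter(r for r in responses if r != correct)
top_error, count = wrong.most_common(1)[0]
share = count / len(responses)

print(f"Most common wrong answer: {top_error!r} ({share:.0%} of the class)")
```

If one wrong answer dominates, as the sign error does here, the class probably shares a single misconception; a scattered tally hints at guessing or a confusing question.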

Step 5: Synthesise and Formulate an Action Plan

This is the most critical step. Connect the dots from your analysis.

- Insight: "The class average for 'Financial Mathematics' was only 45%. The question-by-question analysis shows they specifically failed on Question 5.3, an 'Application' level question about compound interest."

- Action Plan: "I will dedicate two lessons next week to re-teaching compound interest, focusing specifically on word problems and real-world scenarios to bridge the gap from theory to application. I will then give them a short quiz with similar application-style questions to assess if the gap has been closed."

From Data to Action: Translating Insights into Classroom Strategy

A diagnostic analysis is useless if it remains a file on your laptop. Its value is realised when it informs your teaching.

For Whole-Class Intervention

If the data shows that over 60% of the class struggled with a specific CAPS topic or cognitive skill, it warrants a whole-class intervention. This could be:

- Re-teaching the concept using a different methodology (e.g., a practical demonstration instead of a lecture).

- Providing more scaffolding and worked examples.

- Administering a low-stakes follow-up quiz to check for improvement.
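The 60% rule of thumb above can be written as a one-line check. This sketch assumes that "struggled" means scoring below 50% on the topic; that cut-off and the function name are illustrative choices, not a CAPS requirement.

```python
def needs_whole_class_intervention(topic_scores, pass_mark=50, trigger=0.60):
    """True when more than `trigger` of the class scored below `pass_mark`."""
    struggling = sum(1 for s in topic_scores if s < pass_mark)
    return struggling / len(topic_scores) > trigger

# Hypothetical per-learner percentages for one topic in two classes.
class_a = [30, 40, 45, 48, 20, 60, 70, 35, 42, 49]  # 8 of 10 below 50
class_b = [30, 40, 45, 48, 20, 60, 70, 35, 80, 90]  # 6 of 10 below 50

print(needs_whole_class_intervention(class_a))
print(needs_whole_class_intervention(class_b))
```

Class B sits exactly on the 60% line and therefore does not trigger the check, because the rule says "over 60%"; adjust `trigger` if your department prefers a different cut-off.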

For Small Group Support

The analysis will likely reveal smaller clusters of learners who share the same learning gaps. You can use this data to form temporary, targeted support groups. For example, you can pull aside the 5-6 learners who all struggled with analysing political cartoons in History for a 20-minute focused session.
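Forming these groups amounts to listing, per topic, the learners who fell below a chosen threshold. A minimal sketch follows; the learner names, topics, scores and the 50% threshold are all hypothetical.

```python
from collections import defaultdict

# Hypothetical per-learner topic percentages.
results = {
    "Learner A": {"Exponents": 35, "Trigonometry": 72},
    "Learner B": {"Exponents": 44, "Trigonometry": 38},
    "Learner C": {"Exponents": 81, "Trigonometry": 47},
    "Learner D": {"Exponents": 66, "Trigonometry": 90},
}

def support_groups(results, threshold=50):
    """Map each topic to the learners who scored below the threshold."""
    groups = defaultdict(list)
    for learner, topics in results.items():
        for topic, pct in topics.items():
            if pct < threshold:
                groups[topic].append(learner)
    return dict(groups)

groups = support_groups(results)
print(groups)
```

A learner can appear in more than one group, as Learner B does here, so the groups are best treated as temporary and topic-specific.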

For Future Planning and Assessment Design

Your findings are invaluable for future planning.

- Pacing: You may realise you need to adjust your ATP (Annual Teaching Plan) to spend more time on demonstrably difficult topics.

- Assessment Quality: The analysis also holds a mirror up to your assessment. Was it well-designed? Did it have an appropriate balance of cognitive levels as per CAPS assessment guidelines? Did it accurately cover the scope of the curriculum?
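The cognitive-balance question can be checked by comparing the share of marks at each level with the target weighting for the subject. In the sketch below the `target` split, the `paper` items and the 5-point tolerance are assumptions made for illustration; the real weighting should be taken from the CAPS document for your subject and grade.

```python
from collections import Counter

# Hypothetical target weighting per cognitive level, in percent.
target = {1: 20, 2: 35, 3: 30, 4: 15}

# Hypothetical paper: (cognitive level, marks) for each question.
paper = [(1, 10), (1, 20), (2, 25), (3, 30), (4, 15)]

def level_shares(items):
    """Percentage of the paper's total marks allocated to each level."""
    marks = Counter()
    for level, m in items:
        marks[level] += m
    total = sum(marks.values())
    return {lvl: round(100 * marks[lvl] / total, 1) for lvl in sorted(marks)}

def imbalances(shares, target, tolerance=5.0):
    """Levels whose share differs from the target by more than `tolerance` points."""
    return {
        lvl: round(shares.get(lvl, 0) - target[lvl], 1)
        for lvl in target
        if abs(shares.get(lvl, 0) - target[lvl]) > tolerance
    }

shares = level_shares(paper)
print(shares, imbalances(shares, target))
```

In this example the paper carries ten points too many at Level 1 and ten too few at Level 2, while Levels 3 and 4 are on target.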

The Challenge: The Crushing Reality of Manual Diagnostic Analysis

Everything described above is the gold standard. But let's be honest. The reality for a South African teacher or HOD is a crippling workload. Manually creating a detailed exam diagnostic for a single test can take an entire weekend. The process involves:

- Creating a complex spreadsheet.

- Manually tagging every single question to a CAPS topic and sub-topic.

- Manually assigning a Bloom's Taxonomy level to each question—a subjective and often debated task.

- Entering every learner's mark for every single question.

- Wrestling with formulas to calculate all the averages and percentages.

This monumental administrative burden means that, despite its immense value, proper diagnostic analysis is often skipped, rushed, or done superficially. Teachers simply do not have the time.

The Solution: Automating Your Exam Diagnostics with SA Teachers

This is where technology provides a revolutionary solution, built specifically for the South African educational landscape. The SA Teachers platform has developed a cutting-edge, automated Exam Diagnostic tool that eliminates the manual drudgery and empowers educators with instant, deep insights.

Imagine a world where you can transform that mountain of marking into a precise, actionable report in minutes, not days. That's the power of the SA Teachers Exam Diagnostic tool.

Here’s how it works and why it's a game-changer for any school serious about data-driven education:

- Effortless Upload: You simply upload your question paper and memo. It can be a Word document, a PDF, or even just pasted text. There's no complex setup.

- AI-Powered Analysis: The tool’s advanced AI instantly gets to work. It reads and understands each question on your paper.

- Automated Cognitive Level Tagging: Forget arguing about Bloom's levels. The tool automatically analyses the language and structure of each question and assigns a cognitive level (Knowledge, Comprehension, Application, etc.) with high accuracy, perfectly aligned with DBE and CAPS assessment requirements.

- Verifies CAPS Coverage: The AI cross-references your assessment against the official CAPS curriculum for your grade and subject. It instantly shows you your topic coverage, highlighting any areas you may have over-assessed or, more critically, missed entirely.

- Identifies Topic Drift: It can even detect "topic drift": instances where a question, intended to assess one topic, might unintentionally be assessing another, confusing learners and skewing your data.

- Automates the Moderator Task: For HODs and subject heads, this is revolutionary. The entire manual process of moderating an exam for cognitive balance and CAPS coverage is automated. It generates the moderation report for you, saving countless hours and ensuring consistency and quality across the department.

By automating the entire diagnostic analysis process, SA Teachers frees you from the administrative nightmare and allows you to focus on what you do best: teaching. It puts the power of a data analyst directly into the hands of every teacher, enabling you to identify student learning gaps with precision and design interventions that make a real difference.

Conclusion: Embrace Data-Driven Teaching with Confidence

Reading a diagnostic analysis report is no longer a dark art reserved for data scientists. It is an essential skill for the modern South African educator committed to excellence. By moving beyond the final mark and diving into the rich data on topic performance, cognitive skills, and specific learner errors, you can unlock a new level of teaching effectiveness.

The barrier has always been time. The manual process was too cumbersome, the administrative load too heavy. But with innovative tools like the SA Teachers Exam Diagnostic, that barrier has been shattered. You can now generate deep, insightful, and actionable reports with a few clicks.

Stop drowning in spreadsheets and start teaching with precision. Empower yourself with the data you need to close learning gaps, challenge your high-flyers, and ensure every single learner in your classroom has the opportunity to achieve their full potential. The future of South African education is data-informed, and it's more accessible than ever before.

Siyanda M.

Dedicated to empowering South African teachers through modern AI strategies, research-backed pedagogy, and policy insights.